Security for AI Agents

Govern Agents the Same Way You Govern Humans

AI systems are requesting access, acting on behalf of users, and operating with credentials your security team never approved. Every new agent is an identity — with permissions, entitlements, and risk — but most organizations govern them with the same ad-hoc processes they use for service accounts: shared credentials, static roles, and no audit trail. Opal brings agent identities under the same policy framework, auditability, and enforcement as human identities, so your least-privilege posture doesn't have a blind spot shaped like an LLM.

TRUSTED BY LEADING COMPANIES

The Problem

Your Identity Security Has a Blind Spot, and It's Growing Fast

AI agents are proliferating across the enterprise. Coding assistants write and deploy infrastructure. Workflow automations query databases and modify records. Customer-facing bots access production systems on behalf of users. Each one operates with credentials, requests access to resources, and acts with the authority of the identity it's been assigned. But almost none of them are governed the way human identities are. There's no JIT enforcement. No approval chain. No peer analysis. No audit trail that ties a specific action to a specific policy. The same security teams that spent years building least-privilege postures for human access are watching a new class of identity bypass it entirely.

Ungoverned by default

Most AI agents operate with static credentials and standing access — exactly the risk profile JIT was built to eliminate

No policy parity

Human access goes through approval chains and reviews; agent access is provisioned once and forgotten

Invisible to the graph

Agent identities often aren't represented in the access graph at all — making them impossible to query, audit, or revoke

How Opal Solves It

One Framework for Every Identity Type

Opal doesn't treat agent identities as a special case. They're first-class entities in the same access graph, subject to the same OpalScript policies, evaluated by the same Paladin engine, and queryable through the same OpalQuery interface as human identities. When an AI agent requests access, it goes through the same approval chain — with the same contextual evaluation, time-bound enforcement, and audit trail — as a request from any employee. The security posture you've built for humans extends to agents automatically, not as a bolt-on.
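To make the parity idea concrete, here is a minimal sketch in plain Python. It is not OpalScript and not Opal's API: the `AccessRequest` type, the `evaluate` function, and the 60-minute cap are all hypothetical names and values chosen for illustration. It only shows that one policy function can decide requests from both identity types when the rule never looks at the identity kind.

```python
from dataclasses import dataclass
from enum import Enum


class IdentityKind(Enum):
    HUMAN = "human"
    AGENT = "agent"


@dataclass(frozen=True)
class AccessRequest:
    # Hypothetical request shape, not an Opal data model.
    requester: str
    kind: IdentityKind
    resource: str
    duration_minutes: int
    justification: str


MAX_DURATION_MINUTES = 60  # assumed duration cap for this example


def evaluate(request: AccessRequest) -> str:
    """Return 'approve' or 'escalate'; the identity kind is never consulted."""
    if request.duration_minutes > MAX_DURATION_MINUTES:
        return "escalate"  # over the duration cap
    if not request.justification.strip():
        return "escalate"  # no justification supplied
    return "approve"


# The same request from a human and from an agent gets the same decision.
human = AccessRequest("alice", IdentityKind.HUMAN, "prod-db", 30, "incident 42")
agent = AccessRequest("deploy-bot", IdentityKind.AGENT, "prod-db", 30, "incident 42")
decisions = (evaluate(human), evaluate(agent))
```

Because `evaluate` takes the identity kind as data rather than branching on it, an agent-specific rule would be an explicit addition to the policy, not a separate code path.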

Key Capabilities

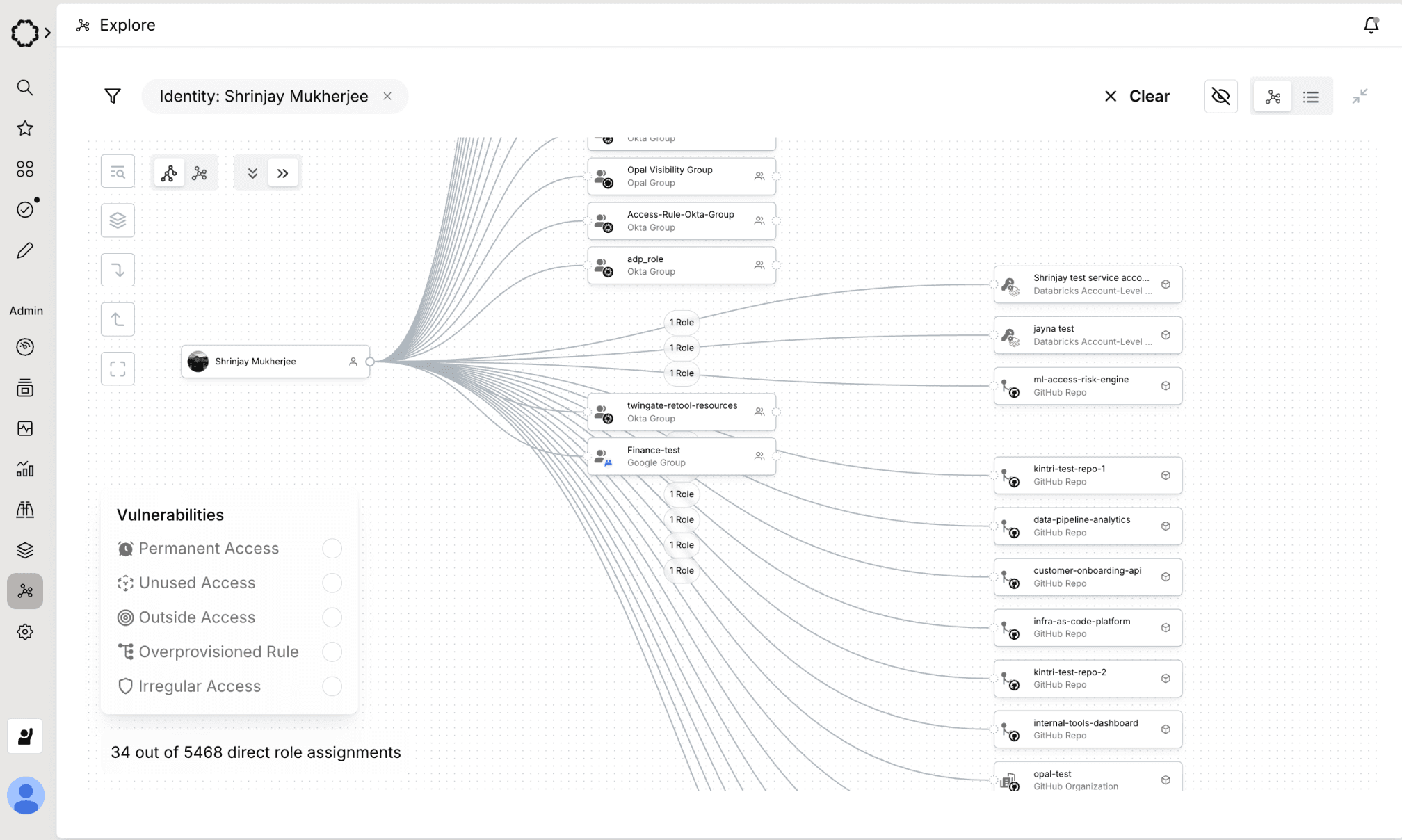

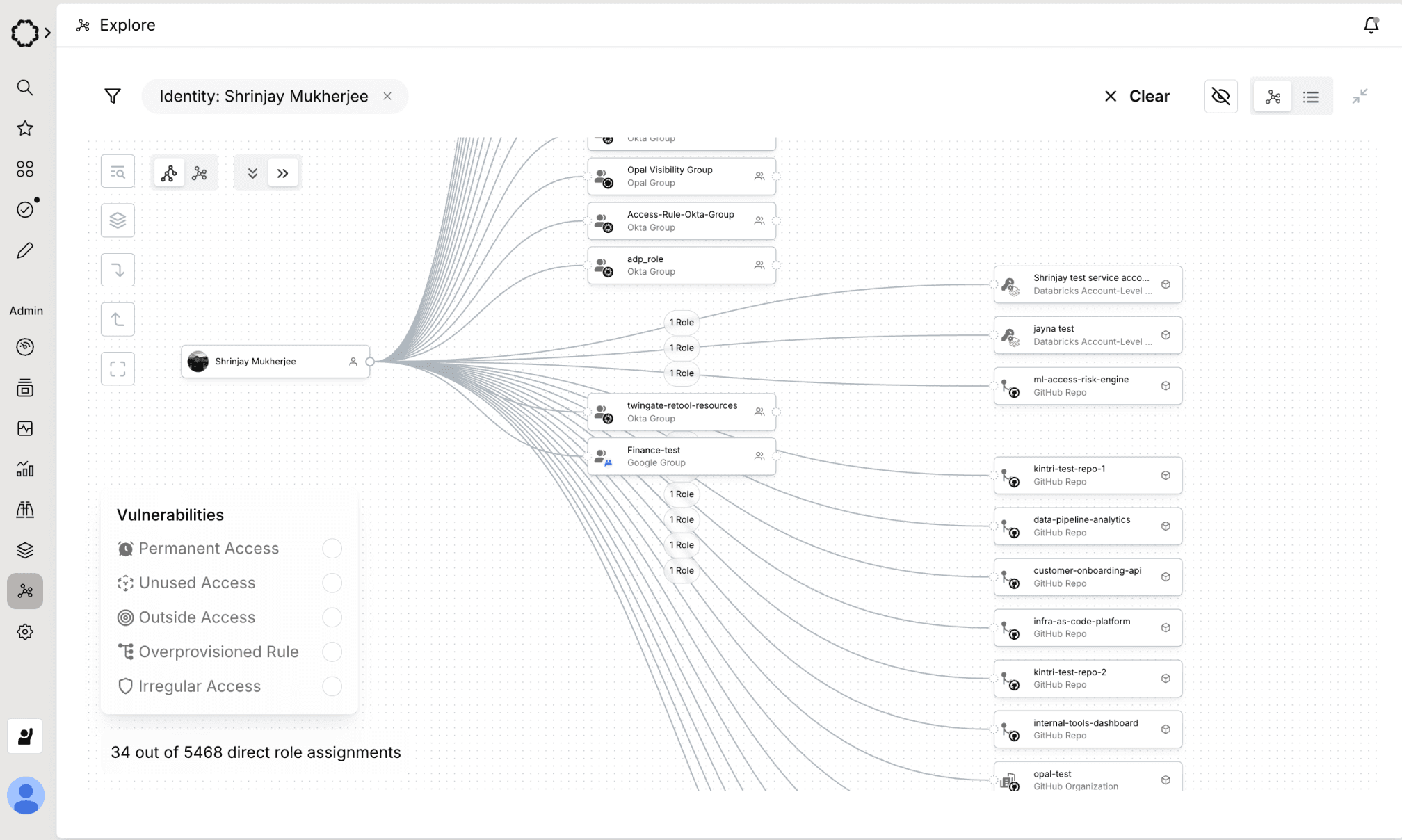

1. Agent Identities in the Access Graph

Agent identities are first-class entities in the same access graph as human identities, governed by OpalScript: a Python-like language for encoding access policy as executable automations. Define approval workflows, JIT rules, SoD constraints, and break-glass logic in code that's version-controlled, testable, peer-reviewed, and composable. Write it by hand, or describe what you need and let AI generate it. It ships through Git, Terraform, and CI/CD, just like the rest of your infrastructure.

2. Policy Parity with OpalScript

The OpalScript policies you write for human access apply to agent identities without modification. JIT rules, approval workflows, SoD constraints, duration caps, and break-glass procedures govern agent access the same way they govern human access. Need different rules for agents? Write them in the same language, with the same version control and the same deployment pipeline. Agent-specific policies like credential scoping …

3. Paladin Evaluates Agent Requests

When an AI agent requests access, Paladin evaluates it with the same multi-signal investigation it applies to human requests — identity context, access history, resource sensitivity, policy compliance, and justification quality. Agents that request access with sufficient context and within policy bounds are approved. Agents that request sensitive access without adequate justification are escalated to a human reviewer with Paladin's reasoning attached. No rubber-stamping. No silent provisioning.
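The approve-or-escalate flow described above can be sketched roughly as follows. This is an illustration in plain Python, not Paladin's actual logic: the `Signals` fields, the `review` function, and the five-word justification threshold are assumptions made up for the example. The point it shows is that an escalation carries its reasons with it, so the human reviewer sees why the request was not auto-approved.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Signals:
    # Hypothetical inputs standing in for the signals named in the text.
    within_policy: bool
    resource_sensitive: bool
    justification: str
    previously_used_resource: bool


@dataclass
class Decision:
    outcome: str  # "approve" or "escalate"
    reasons: List[str] = field(default_factory=list)


def review(signals: Signals, min_justification_words: int = 5) -> Decision:
    """Collect every reason for concern; escalate if any exist."""
    reasons: List[str] = []
    if not signals.within_policy:
        reasons.append("request falls outside policy bounds")
    weak = len(signals.justification.split()) < min_justification_words
    if signals.resource_sensitive and weak:
        reasons.append("sensitive resource requested without adequate justification")
    if signals.resource_sensitive and not signals.previously_used_resource:
        reasons.append("no prior access history for this sensitive resource")
    return Decision("escalate" if reasons else "approve", reasons)
```

A request for a non-sensitive resource within policy returns `approve` with an empty reason list; a sensitive request with a two-word justification returns `escalate` with the reason attached.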

4. Time-Bound Agent Access by Default

Standing access for AI agents is the same risk as standing access for humans — arguably worse, because agents operate at machine speed and don't take vacations. Opal enforces JIT and time-bound access for agent identities by default. Credentials are scoped to a task. Access expires on completion. Long-running agents are subject to periodic re-evaluation. The attack surface from a compromised agent credential is bounded by the same duration and scope policies that govern human access.
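As a rough sketch of what task-scoped, expiring access means in practice, the plain-Python example below models a grant that stops being valid when its time limit passes or when the task is marked complete, whichever comes first. The `Grant` class and `issue_grant` helper are hypothetical and do not reflect how Opal issues or revokes credentials.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Grant:
    # Hypothetical grant record for illustration only.
    agent_id: str
    resource: str
    expires_at: datetime
    task_done: bool = False

    def is_active(self, now: Optional[datetime] = None) -> bool:
        """Active only while the task is unfinished and the TTL has not elapsed."""
        now = now or datetime.now(timezone.utc)
        return not self.task_done and now < self.expires_at


def issue_grant(agent_id: str, resource: str, ttl: timedelta,
                now: Optional[datetime] = None) -> Grant:
    """Create a grant that expires `ttl` after `now` (defaults to current UTC time)."""
    now = now or datetime.now(timezone.utc)
    return Grant(agent_id, resource, now + ttl)
```

With a 15-minute TTL, a stolen credential is useful for at most 15 minutes, and for less if the agent finishes its task and sets `task_done` earlier.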

Impact

Trusted by security teams that ship fast and sleep well.

86K

Time-bound access requests

JIT Access and UARs Enhance Productivity and Security at Databricks

See customer story

5,353

Okta entitlements governed

How Mercari Built Zero-Touch Access to Production

See customer story

5,000

Employees secured

Blend Transforms Identity Security with Deterministic Logic

See customer story

150+

Apps under governance

Superhuman Reduced Access Risk While Improving End-User Experience

See customer story

The Next Identity Crisis Is Already Here. Govern It Now.

AI agents are the fastest-growing identity type in your environment, and the least governed. Opal extends the same programmable, auditable, enforceable security posture you've built for humans to every agent identity in your organization. No blind spots. No bolt-ons. No second-class identities. Track and manage your employees' usage of Anthropic, OpenAI, and Devin platforms today.

Stop Reviewing.

Start Enforcing.
